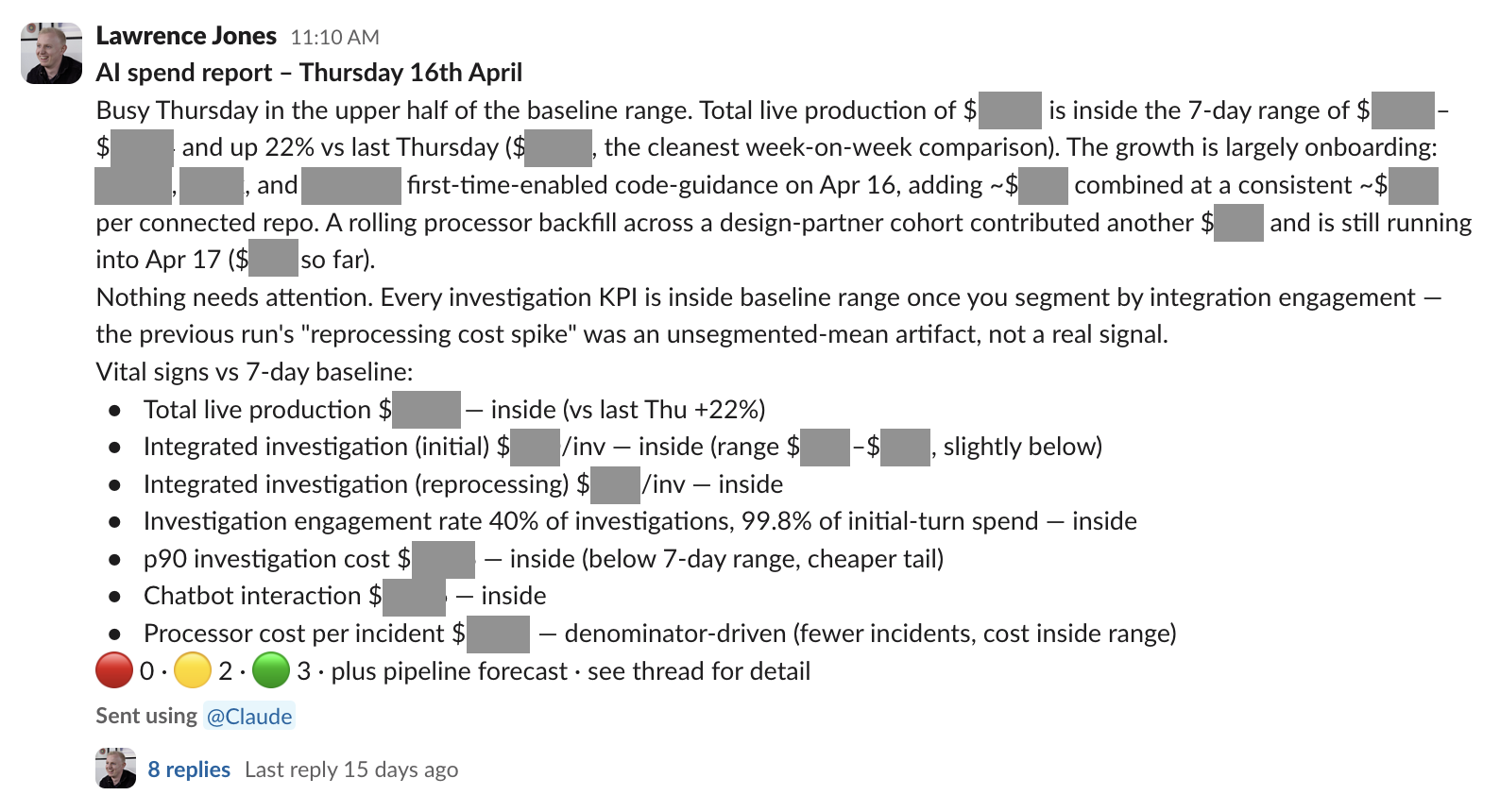

Every weekday morning, someone on the AI team at incident.io kicks off our spend report skill. Claude pulls a dozen BigQuery queries, computes a 7-day baseline range for the KPIs we track, hunts for anomalies (runaway processors, mis-tagged spend, onboarding spikes) and posts a structured report into our #ai-costs-pulse channel.

The skill exists because AI inference costs are growing fast and don’t reduce neatly to a single graph. Spend by feature, by prompt, by org, by initial-turn vs reprocessing, by internal vs customer — there are too many dimensions to dashboard sensibly, and even with the right charts the more useful question is usually why a number moved rather than what the number was. The skill produces the kind of analysis someone on the team would have written by hand if they had a spare half-hour each morning.

I want to walk through how I built it, because I think the process matters more than the artefact you end up with.

Why first drafts fail

A skill is, mechanically, a markdown file in a known location that an agent reads and executes. It’s a runbook — the kind of document a teammate might write to explain how they handle a recurring piece of work — except the audience is an agent rather than another human, and the document gets read end-to-end before each run rather than skimmed when someone gets stuck.

This is one of the more interesting things to have happened to documentation in a long time. Markdown that used to be a static reference is now closer to executable code: dense, specific, and load-bearing. Documentation has become more valuable than it was, and harder to get right.

You could, in principle, just ask Claude to write a skill for you — describe the process in a couple of sentences, ask for a runbook, and call it done. I haven’t found that works very well.

The best book I’ve read on writing this kind of executable process documentation is Atul Gawande’s The Checklist Manifesto. Gawande’s domain was surgery, aviation and construction — not software — but the book is fundamentally about what makes a piece of process documentation reliably followable by experts under load, and most of its conclusions transfer.

The takeaway that matters most for skill-writing is that you’re not done with a checklist when you’ve written it. You’re done when you’ve iterated on it through real use, watched it fail, and rewritten the items it got wrong. The WHO surgical safety checklist went through dozens of operating-theatre trials before publication — items dropped because they turned out to be noise, items added because they addressed real failure modes the first draft hadn’t covered, and most of the value came from the iteration rather than the writing.

I think the same is true for AI skills. The first draft you write reflects the steps you remembered, which isn’t quite the same as the steps an agent needs to be told. Closing that gap takes the same kind of iterative testing.

Solve the problem first

I spent the first couple of hours not writing a skill at all. I opened a Claude session and asked it to analyse the previous day’s spend.

Please run an analysis of app/ai/docs/ai-spend.md for the last day (April 16th) of spend across all elements of AI spending.

Once Claude had pulled the basic numbers, I asked for more.

Can you do a deep dive into this? I want to understand:

- Can we review all our tags and suggest which tags we should add or adjust

- Does any of this spend look like something that is unusual or caused by bugs/runaway processing

- Why are we going via Sonnet 4.5 for anything? We should be using 4.6 in Vertex for all our stuff

From here the work got more interesting. We spent the next hour and a bit arguing through the data — what each tag_feature actually meant in business terms, what counted as real spend versus internal noise from engineers running experiments, and where margins lived. Halfway through, we hit a tagging issue: code-guidance generation was being categorised as backfill when it was actually customer-facing onboarding traffic, while subsequent generations belonged in our processor surface. After a back-and-forth I landed on a concrete proposal.

How do you feel about creating a new feature of code-guidance that represents generating guidance for a repository, and then we use the source as either backfill if it’s an initial generation or processor if it’s subsequent?

We fixed the underlying dbt model in the same session. Other things came up too: a missing column (turn_phase hadn’t been wired through yet), some prompts still routing through Sonnet 4.5 when they should have moved to 4.6 on Vertex, and a bimodal distribution in investigation cost that I hadn’t realised was hiding the real signal.

None of this could have been written into a skill ahead of time, because none of it was known ahead of time. If I had skipped to drafting the skill from memory, I would have encoded my pre-existing assumptions — the wrong tagging, the missed routing, the bimodal distribution I hadn’t noticed — and the skill would have looked plausible while producing misleading reports.

After a couple of hours of this I had a report I trusted, and the principle I wanted the skill to start with: never report a flag without investigating it. Only then did I ask Claude to write the skill itself.

yes, let’s draft this skill. I want to ensure that if we find any organisation or prompt or pattern that looks anomalous, we genuinely dig into what has driven that spend increase, whether it’s backfills or a bug or etc

Extract reference into the codebase

I’d recommend extracting the reference content — data model, queries, taxonomies, anything an agent might need to look up — into separate files in your codebase, and keeping the skill itself focused on the orchestration logic that points at them.

For the spend report, all of the data model description, canonical BigQuery queries, tag taxonomy, and the list of internal orgs to filter out went into server/app/ai/docs/ai-spend.md, with investigation-specific details in a sibling doc. About 1,600 lines between them. The skill itself reads like a runbook: build the baseline, track these KPIs, scan for these flags, investigate each one before reporting, write the report in this shape, post it to this channel. When it needs a query, it points at the doc rather than pasting the SQL inline.

The split is worth the effort for three reasons. The reference docs are useful outside the skill — those queries are the ones I’d reach for when debugging an alert or doing an ad-hoc analysis, and having them in a known location helps anyone on the team who needs them.

They’re also visible to agents working anywhere in that part of the codebase, loaded into context whenever someone is editing AI cost code rather than only when the skill is active. The same investment pays for both narrow and wide use cases.

And the skill itself stays followable. An agent reading 1,600 lines of mixed runbook and SQL is going to lose the thread, where one reading 400 lines of orchestration with pointers to reference material can keep its place.

The dry-run loop

Once you’ve drafted a skill, you need to test whether it actually works. The author is not a great judge of this — they’re carrying context the skill doesn’t capture: which dataset they meant, which step is load-bearing, what “the usual format” looks like. The best way to find out is to put the skill in front of an agent that has none of that context and see what they make of it.

I do this with a sub-agent specifically because starting a sub-agent resets its context. The sub-agent has only what’s in the skill itself — no prior conversation, no implicit assumptions about what you meant. If a step only worked because of context you were carrying in your head, the sub-agent will fail at it — and that failure is exactly the thing you should have written into the skill but didn’t.

The prompt I use looks something like:

Run the skill at .claude/skills/ai-spend-report/SKILL.md end-to-end against yesterday’s data. Don’t fix anything as you go — just run it as written. When you’re done, report back in three categories: anything ambiguous (places the skill could plausibly be interpreted two ways), anything wrong (queries that errored, paths that didn’t exist, environments that didn’t match — and what you did to work around it), and anything improveable (things you noticed the skill could have done that would have produced a better output).

The first dry-run on a new skill will come back with findings in all three categories, and the second usually does too. Several of the BigQuery hygiene rules in the spend report skill started as findings from runs that went wrong: always qualify column names with table aliases (an ambiguous-column error once cancelled a whole batch of parallel queries), always pass --max_rows=5000 on per-day-by-dimension queries (the default 500-row limit was silently truncating the target day’s data), don’t invent new queries — adapt the ones in the runbook (Claude kept trying to write queries I’d already debugged a better version of). Each one is a permanent skill item that wasn’t in the first draft.

The thing to avoid is treating the dry-run as a one-time pre-launch pass. We use it as a continuous practice: someone on the team runs the spend report each morning as a real piece of work, notices a way the skill could have done better, updates it, and dry-runs the new version before the next morning’s run. Just like in the checklist manifesto, you keep adjusting as you learn more. A skill that hasn’t been edited in months is probably either not being used, or quietly producing worse output than it could.

Removing the human

There’s a useful version of “getting the human out of the loop” with agents: set your success criteria, ask the agent to iterate until it meets them, and review only the final result.

For skill-building, this is really effective. Once you’ve drafted a skill and eyeballed a dry-run or two to make sure the framework is right, I’d recommend asking an agent to dogfood the skill in a loop — run it end-to-end, critique what it produced against the three-category schema, apply fixes to the skill, run it again — and keep going until it has no remaining feedback. The agent runs many cycles of the dry-run loop autonomously, and you only come in to review the converged version.

What makes this work is a clear success criterion. Without the three-category feedback from the previous section (ambiguous, wrong, improveable) the agent has nothing to optimise against, and “iterate until done” doesn’t really mean anything. Once you have a schema, you can hand the optimisation to the agent.

I read The Checklist Manifesto a few years ago and didn’t expect it to be relevant to software work, let alone AI. It turns out the shape carries over almost unchanged.

If you liked this post and want to see more, follow me on LinkedIn.